A pattern keeps showing up. A SaaS company that has been running successfully for years, sometimes a decade or more, gets a call from a major customer. The customer's risk team has reviewed the company's security posture and determined that the colocation provider's SOC 2 report is no longer sufficient. They want the company's own SOC 2 report, scoped to their application and infrastructure, not the data center's physical security.

The company starts researching. Every guide they find assumes AWS, Azure, or GCP. Every compliance automation platform advertises "connect your cloud account and get audit-ready in weeks." The tooling, the guides, and the case studies are all built for a world where infrastructure lives in a hyperscaler. None of it maps to what this company actually runs: physical servers in a colocation facility, a self-managed network, and a security stack built on open-source tools that have been running reliably for years.

This is the guide those companies don't find: what SOC 2 readiness actually looks like when the infrastructure is bare metal, the network is self-managed, and "connect your cloud account" is not an option.

Why Bare Metal SOC 2 Is Different (and Where It's Not)

SOC 2 Trust Services Criteria don't prescribe a specific infrastructure model. The CC6 series requires logical and physical access controls. CC7.1 requires vulnerability identification and monitoring. CC8.1 requires change management. None of these criteria say "use AWS CloudTrail" or "deploy a cloud-native scanner." They describe outcomes, not implementations.

This means bare metal environments are fully compatible with SOC 2. The criteria don't care whether a firewall is a security group in AWS or a dedicated appliance in a rack. They care whether access is controlled, changes are tracked, and vulnerabilities are identified and remediated.

Where things diverge is in the evidence layer. Cloud-native compliance platforms (Vanta, Drata, Secureframe) are built to pull evidence automatically from cloud APIs, identity providers like Okta, and SaaS endpoint management tools like Jamf. When the infrastructure is a set of physical servers in a colo with Active Directory, a self-hosted SIEM, and VPN-based access, those automated integrations cover less ground. The security may be equivalent or better, but the evidence collection requires more intentional design.

Cloud vs. On-Prem: Evidence Automation Gap

Cloud environments often have 50-60% of SOC 2 evidence flowing automatically through platform integrations. On-premises environments typically start closer to 20-30% automated coverage, with the rest requiring structured manual processes and deliberate evidence capture.

That gap is not a weakness. It's a design problem, and it's solvable.

What the Readiness Looks Like

SOC 2 readiness for bare metal environments follows three phases. The structure is the same as any SOC 2 audit preparation, but the content of each phase looks materially different from a cloud-first implementation.

Phase 1: Assessment (2-4 weeks)

The assessment starts with what's actually in the rack: production servers, databases, network appliances, storage systems, bastion hosts, security tooling. Every system gets inventoried, mapped, classified, and assigned an owner. Proper SOC 2 scoping is critical here, as bare metal environments often have more complex boundaries than cloud deployments.

A pattern that works well in on-premises environments is tiered asset classification:

- Tier 1 (High-risk): Production systems, internet-facing services, administrative gateways (VPN, bastion host, etc.). These get the most rigorous controls, the shortest patching SLAs, and the highest scanning frequency.

- Tier 2 (Medium-risk): Endpoints, management systems, internal tooling. Proportionate controls, moderate cadence.

- Tier 3 (Low-risk): Network appliances, storage devices, infrastructure that supports but doesn't directly access sensitive data. These still require baseline controls, but those controls can run on a less frequent cadence.

This tiered approach matters because SOC 2 CC7.1 requires vulnerability identification, but it doesn't prescribe a single cadence for every asset class. A risk-based model where internet-facing production servers are scanned weekly and internal network switches are reviewed annually is more defensible than a policy that claims weekly scanning across everything but fails to deliver it.
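The tiered cadence can be sketched as a small check over the asset inventory. This is a minimal illustration, not a prescribed tool: the `Asset` fields, host names, owners, and exact day counts are assumptions, with the intervals loosely following the weekly, quarterly, and annual cadences used as examples in this guide.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Assumed maximum days between scans per tier (weekly, quarterly, annually).
# Replace with whatever your own policy commits to.
SCAN_INTERVAL_DAYS = {1: 7, 2: 90, 3: 365}


@dataclass
class Asset:
    name: str
    tier: int           # 1 = high-risk, 2 = medium-risk, 3 = low-risk
    owner: str
    last_scanned: date


def overdue_assets(assets: list[Asset], today: date) -> list[Asset]:
    """Return assets whose last scan is older than their tier allows."""
    return [
        a for a in assets
        if today - a.last_scanned > timedelta(days=SCAN_INTERVAL_DAYS[a.tier])
    ]


# Hypothetical inventory entries for illustration only.
inventory = [
    Asset("vpn-gateway", 1, "alice", date(2024, 5, 1)),
    Asset("build-server", 2, "bob", date(2024, 4, 1)),
    Asset("core-switch", 3, "carol", date(2023, 9, 1)),
]

late = overdue_assets(inventory, today=date(2024, 5, 20))
print([a.name for a in late])  # ['vpn-gateway']
```

A check like this makes it obvious when the policy promises more than the team delivers, which is the situation the risk-based model is meant to avoid.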

The assessment also maps existing security practices to SOC 2 criteria. In our experience, companies running bare metal infrastructure for 10+ years are often doing more real security than they realize. The firewall rules are tight. Patching happens, even if only informally documented. Access is controlled through network architecture rather than identity provider policies.

The gap is rarely "you're not doing security." It's "you can't prove you're doing security." We wrote a deeper breakdown of how to bridge the evidence gap between strong security practices and audit-ready documentation.

Phase 1 deliverables:

- Scoped system inventory with asset classification tiers

- Network diagrams for key access patterns. These identify the entry points that need to be secured:

  - Primary application access: how end users reach the product

  - Infrastructure administration and development: how the team manages systems

- Data flow diagram for the main application, showing where sensitive data comes from, where it's stored, and where it goes

- Gap assessment mapped to SOC 2 criteria (not a generic checklist, mapped to the actual systems and architecture)

- Prioritized remediation plan with owners, tasks, and definition of done

Phase 2: Build (4-8 weeks)

The build phase closes gaps identified in the assessment and produces the artifacts that make the security program tangible and operational.

A common problem we see: the team's actual day-to-day responsibilities end up buried across policies, controls, and evidence descriptions, spread across dozens of documents with no single actionable reference. People don't know what they're supposed to do on Monday morning to maintain compliance. The Security Program Manual is how this gets solved. (If you're evaluating whether compliance automation platforms alone can solve this, the short answer is: they cover part of it, but the manual governs the rest.)

The Security Program Manual

For each of roughly 15 security domains (Vulnerability Management, Access Management, Network Security, Backup and DR, and so on), the manual creates concise operating procedures covering five elements:

Five Elements Per Security Domain

- Scope: What systems and data assets does this domain cover? Which tier are they in?

- Technology: What tools are in use, including where the process is manual?

- Evidence: What evidence is captured, how is it retained, and for how long?

- Process: What is the operating cadence? Weekly scans, quarterly reviews, annual assessments?

- People: Who owns it, who reviews it, who backs them up?

The manual becomes the heart of the program. It's the document the team actually references day to day, not the policies (which exist for the auditor).
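One way to keep every domain entry complete is to treat the five elements as a fixed record and flag blanks before a workshop closes. The sketch below is an assumption about how a team might model this, not a format the manual requires; the `DomainEntry` class and the sample values are hypothetical.

```python
from dataclasses import dataclass, fields


@dataclass
class DomainEntry:
    domain: str
    scope: str        # systems and data assets covered, with their tiers
    technology: str   # tools in use, including manual steps
    evidence: str     # what is captured, where, and for how long
    process: str      # operating cadence
    people: str       # owner, reviewer, backup


def missing_elements(entry: DomainEntry) -> list[str]:
    """List the elements that are still blank for this domain."""
    return [f.name for f in fields(entry) if not getattr(entry, f.name).strip()]


# Hypothetical entry with one element not yet agreed.
vuln_mgmt = DomainEntry(
    domain="Vulnerability Management",
    scope="Tier 1 production servers and VPN gateway",
    technology="Wazuh vulnerability detection",
    evidence="",
    process="Weekly scans for Tier 1",
    people="Owner: infrastructure lead; backup: senior sysadmin",
)

print(missing_elements(vuln_mgmt))  # ['evidence']
```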

How Domains Get Built

The most effective approach we've found is collaborative workshops, typically 1.5-2 hours each, covering one or two domains per session. Each domain moves through four stages:

- Review the domain in the manual (e.g., Vulnerability Management): align on which tools are in use, how often things run, where results go, how findings get actioned, and who owns it. These are the activities the team will actually execute. They need to be realistic, not aspirational.

- Review the corresponding policy: once the manual section is settled, the policy gets updated to match the manual, not the other way around. This is where generic templates fail. A company running bare metal with a firewall appliance, Active Directory, and VPN-based access needs policies that describe how access actually works in their environment, not policies that reference cloud provider access controls and container orchestration security.

- Review related controls: typically straightforward once the manual and policy are aligned. Controls get mapped across CC1-CC9 (the full SOC 2 Security Trust Services Criteria).

- Define evidence requirements: what evidence the auditor needs to see, and where it comes from. For example, vulnerability scanning evidence might be split into separate tests for Tier 1 systems (production, internet-facing, scanned weekly), Tier 2 (internal, scanned quarterly), and Tier 3 (devices with limitations like network appliances, verified annually).

With 15 domains and one or two per session, roughly 10 workshops cover the full program. By the time all domains are complete, the team has actual operational procedures they understand and can execute, policies that match their environment, controls that make sense for their setup, and exact evidence requirements to pass the audit.

Evidence Architecture

In a cloud environment, a compliance platform can pull IAM logs, configuration snapshots, and deployment records automatically. In a bare metal environment, the evidence architecture needs to be designed. Where do firewall rule reviews get documented? How are patching records retained? Where does the quarterly vulnerability scan output get stored?

The answer is often simpler than teams expect. A ticketing tool (Jira, GitHub Issues, ServiceNow, etc.) that the team already uses for operational tasks can serve as the primary evidence trail. The key is adding structure: consistent fields (who requested it, who approved it, what changed, what was the outcome), timestamps, and a retention policy.
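The "consistent fields" idea can be enforced with a simple completeness check on exported tickets. This is a sketch under stated assumptions: the field names and the ticket ID are invented for illustration and would need to be mapped to whatever the team's ticketing tool actually exports.

```python
from datetime import datetime

# Hypothetical field names covering requester, approver, change, outcome, timestamp.
REQUIRED_FIELDS = ("requested_by", "approved_by", "what_changed", "outcome", "closed_at")


def evidence_gaps(ticket: dict) -> list[str]:
    """Return required fields that are missing or empty in a ticket record."""
    return [field for field in REQUIRED_FIELDS if not ticket.get(field)]


ticket = {
    "id": "OPS-123",  # made-up ticket ID
    "requested_by": "alice",
    "approved_by": "",  # approval was never recorded
    "what_changed": "Removed stale allow rule for decommissioned host",
    "outcome": "Rule removed, verified by rescan",
    "closed_at": datetime(2024, 5, 2, 14, 30),
}

print(evidence_gaps(ticket))  # ['approved_by']
```

Running a check like this before the audit window surfaces tickets that would not hold up as evidence, while there is still time to fix the process.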

For companies using a GRC platform like Secureframe, Vanta, or Drata alongside on-premises infrastructure, the platform still adds significant value. It manages the control framework, tracks test status, stores policies, and handles evidence that can be uploaded manually. The difference is that fewer integrations fire automatically, so the team needs to understand which evidence flows in and which evidence they capture through structured processes.

On-Prem Tooling

Since bare metal environments can't rely on cloud-native monitoring, here are tools we see used successfully across on-premises environments:

| Category | Tools |
| --- | --- |
| Logging & Monitoring | Wazuh, Security Onion, Splunk, or a managed SOC service. See our logging architecture guide for design patterns. |
| Vulnerability Scanning | Wazuh (built-in vulnerability detection), Tenable, Nexpose, or managed scanning services |
| Configuration Scanning | Wazuh (CIS benchmarks), OpenSCAP, Tenable, or Nexpose |

The right fit depends on team size, budget, and existing infrastructure. This gets evaluated during the Build workshops, not prescribed upfront.

Security Posture Report

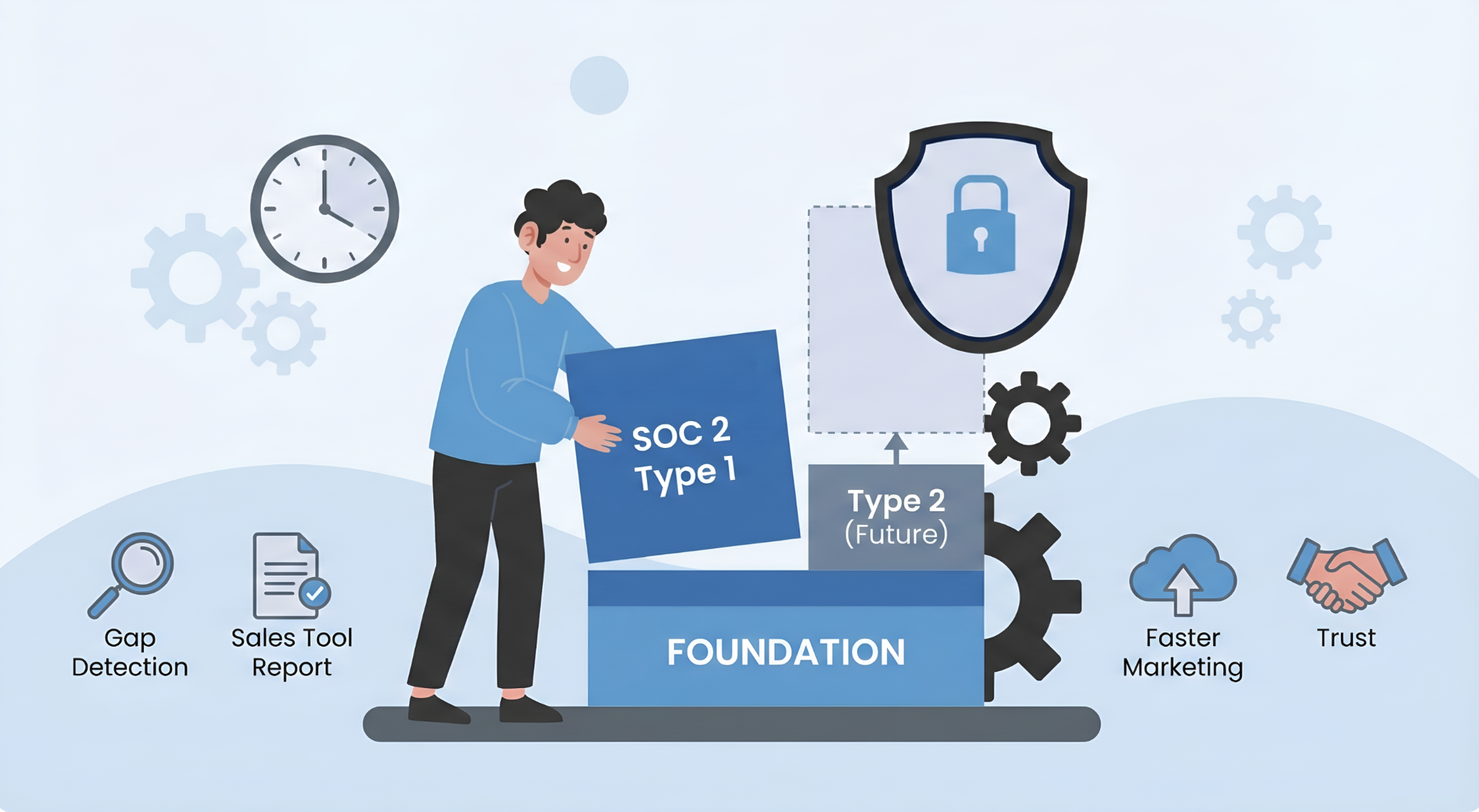

In addition to the internal Security Program Manual, the build phase can also produce an externally facing Security Posture Report. This document explains the foundations of the security program to customers, prospects, and partners. Companies with deals in motion that can't wait months for a formal audit use it to communicate their security story while certification runs in parallel. Paired with a Trust Center (a self-service portal where buyers access policies, reports, and supporting documents), it provides a defensible answer to "are you secure?" before the SOC 2 report arrives.

Phase 2 Deliverables

- Security Program Manual: the internal playbook documenting how every security domain is run

- Policy and procedure package tailored to the company's real architecture and operations

- GRC platform configured with controls, tests, and evidence expectations mapped to the actual environment

- Evidence capture processes designed and documented for each domain

- Company risk assessment and vendor risk assessment

- Incident Response Plan

- Customized templates for access reviews, firewall reviews, and BCDR tabletop exercises

- Security Posture Report (optional, for companies that need to communicate security posture before the audit completes)

Phase 3: Audit Preparation and Handoff (2-4 weeks)

The final phase packages evidence and prepares the team for auditor interactions.

For a Type 1 audit (point-in-time), the evidence bar is lower than teams expect. The auditor is verifying that controls are designed and in place, not that they've been operating over a sustained period. That comes with Type 2. This means the team needs to demonstrate current state: policies are published and acknowledged, vulnerability scanning is running and producing results, access is controlled and documented, and patching has run on schedule at least once.

Here's how the key SOC 2 domains play out when the infrastructure is on-premises instead of cloud:

Physical Security

If the infrastructure lives in a colocation facility, the colo provider typically manages physical access controls under their own SOC 2 report. The company's responsibility is documenting the relationship: which physical controls the colo manages, what complementary controls the company maintains, and how the colo's SOC 2 report is reviewed annually. This is standard subservice organization documentation, including the complementary subservice organization controls (CSOCs) the company relies on the colo to operate.

Network Security

Without cloud security groups, the auditor will want to see firewall configurations, rule review processes, and evidence of network segmentation between environments (production, staging, development). In on-premises environments, network segmentation is architectural, enforced at the firewall level with default-deny rules, rather than dependent on correctly configured security group policies in the cloud.
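A firewall rule review can be partly mechanized. The sketch below assumes a simplified, hypothetical rule export (a list of dictionaries evaluated first-match, top to bottom); real appliance exports differ by vendor, so treat the format and addresses as placeholders rather than any product's actual output.

```python
# Hypothetical, simplified rule export; real firewall formats will differ.
rules = [
    {"action": "allow", "source": "10.0.10.0/24", "destination": "10.0.20.5", "port": "5432"},
    {"action": "allow", "source": "any", "destination": "any", "port": "22"},
    {"action": "deny", "source": "any", "destination": "any", "port": "any"},
]


def ends_with_default_deny(rules: list[dict]) -> bool:
    """True if the last rule denies all traffic not matched by an earlier rule."""
    if not rules:
        return False
    last = rules[-1]
    return last["action"] == "deny" and all(
        last[key] == "any" for key in ("source", "destination", "port")
    )


def any_to_any_allows(rules: list[dict]) -> list[dict]:
    """Allow rules with no source or destination restriction, flagged for review."""
    return [
        r for r in rules
        if r["action"] == "allow" and r["source"] == "any" and r["destination"] == "any"
    ]


print(ends_with_default_deny(rules))   # True
print(len(any_to_any_allows(rules)))   # 1
```

The output of a script like this, attached to the review ticket, gives the auditor a dated record that the review happened and what it found.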

Vulnerability Management

The tiered scanning approach described earlier maps directly to what auditors expect. They want to see a risk-based methodology: what gets scanned, how often, what happens when vulnerabilities are found, and what the remediation SLAs are. For systems that can't run scanning agents (network appliances, storage devices, legacy hardware), network-based scanning (Flan Scan, Nmap, etc.) and manual firmware verification against vendor advisories are generally accepted as evidence.

Access Management

Without a centralized cloud identity provider, the auditor will ask about each access path independently: VPN authentication, bastion host access, production server credentials, database access, and application administrative access. Each path needs documented controls: unique user IDs, multi-factor authentication (or compensating controls), and periodic access reviews.
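The per-path requirements can be tracked as a small checklist. This is an illustrative sketch: the `AccessPath` record, the sample paths, and the 90-day review window are assumptions chosen for the example, not values SOC 2 prescribes.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class AccessPath:
    name: str
    unique_user_ids: bool
    mfa: bool
    compensating_control: Optional[str]  # documented alternative when MFA is absent
    last_review: Optional[date]


def findings(path: AccessPath, today: date, max_review_age_days: int = 90) -> list[str]:
    """Return the control gaps for one access path."""
    issues = []
    if not path.unique_user_ids:
        issues.append("shared accounts in use")
    if not path.mfa and not path.compensating_control:
        issues.append("no MFA or compensating control")
    if path.last_review is None or (today - path.last_review).days > max_review_age_days:
        issues.append("access review overdue")
    return issues


# Hypothetical access paths for illustration.
paths = [
    AccessPath("VPN", True, True, None, date(2024, 4, 15)),
    AccessPath("Database", False, False, "reachable only from bastion host", date(2023, 11, 1)),
]

today = date(2024, 5, 20)
report = {p.name: findings(p, today) for p in paths}
print(report)
```

Here the VPN path comes back clean, while the database path is flagged for shared accounts and an overdue review; its compensating control covers the missing MFA.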

Phase 3 deliverables:

- Evidence package organized by control domain

- Auditor-ready documentation (system description, control narratives, evidence index)

- Preparation for auditor requests and walkthroughs

Timelines and What Drives Them

| Phase | Duration | Primary Driver |
| --- | --- | --- |
| Assessment | 2-4 weeks | Infrastructure complexity and documentation maturity |
| Build | 4-8 weeks | Number of gaps, team availability, policy customization |
| Audit prep | 2-4 weeks | Evidence packaging, auditor scheduling |
| Total | 8-16 weeks | |
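The total is simply the sum of the per-phase ranges, assuming the phases run back to back with no overlap. A short check, useful when adjusting a phase estimate for a specific engagement:

```python
# Per-phase (minimum, maximum) durations in weeks, taken from the table above.
phases = {
    "Assessment": (2, 4),
    "Build": (4, 8),
    "Audit prep": (2, 4),
}

# Assumes sequential phases; overlapping work would shorten the total.
total_min = sum(low for low, _ in phases.values())
total_max = sum(high for _, high in phases.values())

print(f"Total: {total_min}-{total_max} weeks")  # Total: 8-16 weeks
```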

Most of our on-prem clients achieve compliance within a 3-month period. What we can't easily estimate upfront is remediation effort. If the team is already implementing security best practices, it will be light. If new tools and processes need to be deployed, it takes more time.

The biggest variable is not the infrastructure model. It's the team's availability. SOC 2 readiness requires input from the people who run the systems. In companies where one person wears multiple hats (IT, security, operations, sometimes development), scheduling working sessions and completing action items takes longer than the technical work itself.

Companies that move fastest share a few traits: they assign a dedicated internal point of contact for the engagement, they commit to a regular cadence of working sessions (typically twice per week), and they treat readiness as a business priority rather than a side project that happens between operational tasks.

What Makes On-Prem SOC 2 Harder (and Easier) Than Cloud

Harder

- Evidence collection requires more intentional design. Cloud APIs generate audit trails automatically; on-prem processes need structured documentation.

- Tooling integration is less turnkey. GRC platforms connect to cloud providers and SaaS tools natively. On-premises systems require more manual evidence workflows.

- Physical security adds a domain that cloud-only companies don't touch. Even when the colo handles it, the subservice organization documentation is additional work.

Easier

- Network security is often stronger by default. Architectural segmentation with dedicated firewall appliances and VLANs is more robust than software-defined security groups that can be misconfigured with a single API call.

- The team knows the infrastructure deeply. A system administrator who has been managing the same servers for a decade can describe every access path, every backup process, and every patch cycle. The knowledge exists; it just needs to be formalized.

- There's less shadow IT, at least in the production and management networks. Cloud environments accumulate forgotten services, test instances, and abandoned resources that expand the audit scope unexpectedly. On-premises environments tend to have clearer boundaries because every system costs real rack space and power.

- Change velocity is lower. In cloud environments with continuous deployment, change management evidence is a firehose. On-premises environments with quarterly releases and documented maintenance windows produce cleaner, more manageable change records.

The Real Blocker Is Not the Infrastructure

The companies that struggle with SOC 2 readiness on bare metal are rarely struggling because of the infrastructure. They struggle because the entire compliance automation market has told them they need to connect their cloud account to get started, and when that doesn't apply, they assume the process must be fundamentally different or harder.

It isn't. SOC 2 criteria are infrastructure-agnostic. The process is: understand what you have, assess it against the criteria, close gaps, document how it runs, and prove it to an auditor. The tools and evidence collection methods vary by infrastructure model, but the structure is the same.

What makes the difference is having policies and processes that match how the company actually operates, not generic templates that describe a cloud-native startup. When a company running bare metal in a colocation facility gets policies that reference their actual firewall appliance, their actual bastion host architecture, their actual patching process with its actual cadence, the program becomes something the team can run and the auditor can verify.

The alternative, policies written for a generic cloud environment and retrofitted onto on-premises infrastructure, is the most common source of audit friction we see. It creates a gap between "what we say we do" and "what we do," and auditors are trained to find exactly that gap.

Running on bare metal and need SOC 2?

We'll walk through your environment, identify the gaps, and scope a readiness plan built around your real architecture and operations. The goal is an effective security program where compliance is a byproduct, not a separate project.

Request a Free Assessment →

Frequently Asked Questions

Can you get SOC 2 if your infrastructure is bare metal or on-premises instead of cloud?

Yes. SOC 2 Trust Services Criteria are infrastructure-agnostic. They describe outcomes (access is controlled, vulnerabilities are identified, changes are tracked), not specific implementations. The criteria don't care whether a firewall is a security group in AWS or a dedicated appliance in a rack. Bare metal and colocation environments are fully compatible with SOC 2.

What tools can replace cloud-native scanners for SOC 2 on bare metal?

For logging and SIEM, Wazuh and Security Onion are widely used across on-premises environments. For vulnerability scanning, Wazuh (built-in vulnerability detection), Tenable, and Nexpose work well. For configuration baselines, Wazuh (CIS benchmarks) and OpenSCAP provide automated scanning. These tools produce the same evidence auditors expect from cloud-native alternatives.

How does SOC 2 scoping differ for bare metal vs. cloud infrastructure?

The biggest difference is that bare metal environments add physical security as a domain (typically handled via the colocation provider's own SOC 2 report) and require documentation of each access path independently (VPN, bastion host, production servers, databases), since there's no centralized cloud identity provider. The tiered asset classification approach, where systems are classified by risk level with proportionate controls, is particularly important for on-prem environments.

How long does SOC 2 readiness take for an on-premises SaaS company?

Most on-prem engagements achieve compliance within a 3-month period: 2-4 weeks for assessment, 4-8 weeks for build (closing gaps, writing policies, configuring the GRC platform), and 2-4 weeks for audit preparation. The biggest variable is team availability, not infrastructure complexity. Companies where one person wears multiple hats (IT, security, operations) take longer because scheduling workshops and completing action items compete with operational responsibilities.

Do compliance automation platforms like Vanta, Drata, or Secureframe work with on-premises infrastructure?

They provide value but cover less ground automatically. Cloud environments typically see 50-60% of evidence flowing through automated platform integrations. On-premises environments start closer to 20-30% automated coverage. The platform still manages the control framework, tracks test status, stores policies, and handles manually uploaded evidence. The difference is that more evidence requires structured manual processes and deliberate capture, which is why the Security Program Manual becomes essential for on-prem teams.

What does an auditor focus on differently for bare metal SOC 2 vs. cloud?

Auditors pay particular attention to four areas where on-premises infrastructure diverges from cloud assumptions: physical security (colocation subservice organization documentation), network security (firewall configurations and segmentation evidence instead of cloud security groups), vulnerability management (tiered scanning that combines agent-based scanning with network-based approaches for devices that can't run agents), and access management (documenting each access path independently since there's no centralized identity provider).

